Why productivity claims from consultants and vendors are misleading your leadership, and what early data actually shows.

Developers have been using generative AI in various stages of the SDLC for a few years now, with mixed results. Yet claims of 30-60% developer productivity gains continue to circulate, especially in consulting narratives.

These numbers sound compelling, but they rest on shaky ground: despite widespread experimentation, only 26% of companies generate tangible value from AI at scale (BCG), and the metrics behind the claims are often flawed.

Why is this dangerous?

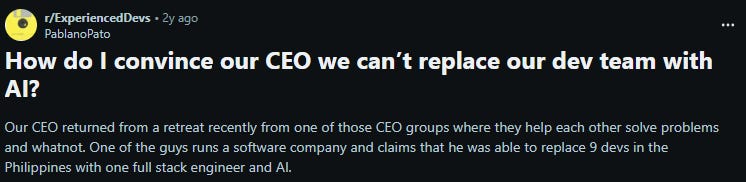

People, and especially non-technical managers, may draw the wrong conclusions, such as replacing half their team with AI agents:

I am currently conducting interviews on how organizations deploy developer experience initiatives. Some interviewees confirm that they are having difficult discussions with managers who, inspired by questionable metrics, believe they can save significant costs by replacing (not augmenting) developers with AI.

But are they wrong?

Yes and no. Some of these studies were very well conducted, but on small, narrow tasks (e.g. a study on GitHub Copilot claiming 55.8% faster task completion). A McKinsey study similarly evaluated productivity as speed on individual tasks (e.g. documentation, coding, refactoring), without considering the impact on overall team throughput.

In practice, development work increasingly shifts from writing code to validating it, through code reviews, testing, and integration. Faster code generation does not remove this work; it redistributes it.
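To make the gap between task speed and team throughput concrete, here is a rough back-of-the-envelope sketch. It is not taken from any of the cited studies. The function name, the 30% share of delivery time spent writing code, and the 5% of extra review effort are purely hypothetical assumptions, and the 55.8% figure is read here as a reduction in task time.

```python
def overall_time_reduction(
    coding_share: float,
    coding_time_reduction: float,
    extra_review_share: float = 0.0,
) -> float:
    """Estimate the fraction of total delivery time saved.

    coding_share: fraction of total delivery time spent writing code (assumed).
    coding_time_reduction: fraction by which coding time shrinks with AI.
    extra_review_share: additional validation work, as a fraction of the
        original total delivery time (assumed).
    """
    for name, value in (
        ("coding_share", coding_share),
        ("coding_time_reduction", coding_time_reduction),
        ("extra_review_share", extra_review_share),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    # Only the coding part of the work gets faster ...
    saved = coding_share * coding_time_reduction
    # ... and some of the gain is spent again on reviewing generated code.
    return saved - extra_review_share


# Hypothetical inputs: 30% of time is coding, 5% extra review effort.
task_only = overall_time_reduction(0.30, 0.558)
with_review = overall_time_reduction(0.30, 0.558, extra_review_share=0.05)
print(f"Without extra review: {task_only:.1%}")
print(f"With extra review:    {with_review:.1%}")
```

Under these made-up inputs, a task-level gain of more than half shrinks to a much smaller share of total delivery time, and shrinks further once additional review effort is counted. The real shares vary by team and have to be measured.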

Nvidia claims its developers produce 3x the amount of code using Cursor, and Microsoft and Google report that more than 25% of their shipped code is AI-generated. Someone still needs to review that code and take ownership once it is deployed. This remains an open challenge, especially as large organizations continue to face significant incidents and regressions (e.g., the AWS outage). The DORA report found that delivery stability dropped by 7.2%. Similarly, getDX saw that “healthy” organizations report 50% fewer incidents, while “unhealthy” ones report 200% more.

These examples highlight the drawbacks of relying solely on output-driven metrics. Writing more code or documentation has always been a weak proxy for productivity, as it says little about quality or whether meaningful value was created.

So are there productivity improvements?

Quantifying productivity was notoriously hard even before GenAI. One proxy that companies apply is an estimate of “time saved”. Survey participants reported average weekly time savings of ~4 hours (N=95,000, getDX), which is in line with what I am hearing from Swiss companies that also surveyed their development teams. This is far from the 30-60% improvements often claimed, but even a 5-10% estimated improvement is significant.
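As a quick sanity check, the reported ~4 hours per week can be converted into a share of the working week. This is a minimal sketch: the 40- and 42-hour weeks are my own assumptions rather than figures from the survey, and the function name is illustrative.

```python
def weekly_share_saved(hours_saved: float, hours_per_week: float) -> float:
    """Return self-reported time savings as a fraction of the working week."""
    if hours_per_week <= 0:
        raise ValueError("hours_per_week must be positive")
    return hours_saved / hours_per_week


# Assumed working weeks; the survey only reports the ~4 hours saved.
for hours_per_week in (40.0, 42.0):
    share = weekly_share_saved(4.0, hours_per_week)
    print(f"{hours_per_week:.0f}h week: {share:.1%}")
# prints:
# 40h week: 10.0%
# 42h week: 9.5%
```

These are self-reported estimates of time, not measured throughput, so they are better read as an upper bound on the gain than as a realized improvement.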

Very small teams working on new codebases may report higher gains, but their workflows – and lack of legacy complexity – are hardly comparable to those of established organizations.

At the same time, I am not convinced that “time savings” is the right term. The time is rarely eliminated; it is freed up and repurposed toward verification and validation tasks (such as code reviews), AI orchestration, and managing growing technical debt.

Generative AI is therefore shifting development work from crafting toward orchestrating and reviewing. Organizations can positively influence outcomes by providing appropriate tooling, integrating AI effectively into workflows and processes, investing in training and guidelines, and actively managing both technical and cognitive debt.
