Why nearly half of developers *actively* distrust AI tools — and what the research says actually changes that.

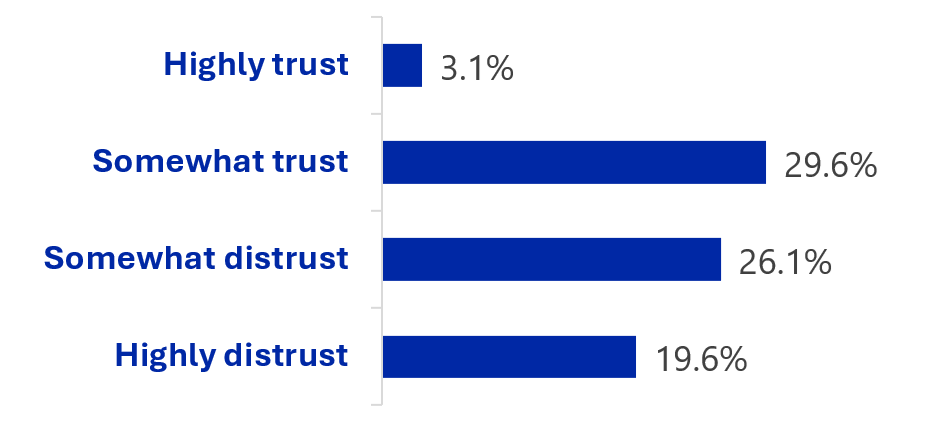

According to the 2025 Stack Overflow Developer Survey, with over 33,000 respondents, only 3% highly trust the accuracy of AI tools, while 46% actively distrust them. This isn’t a niche concern: it covers a large part of the developer population, and it affects not only the adoption of AI tools but also their effect on productivity.

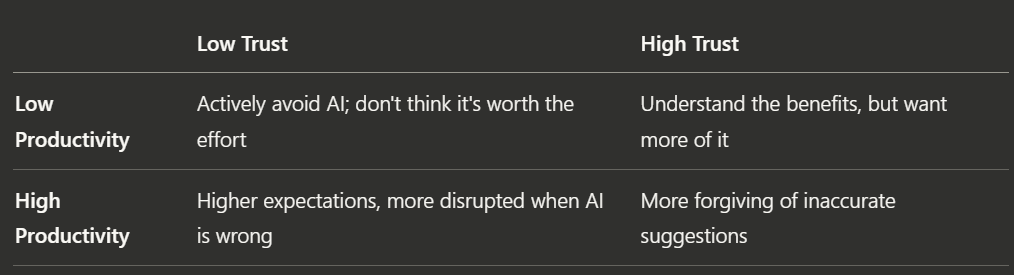

Through a log-data analysis, Google’s research teams confirmed that developers who frequently accepted AI suggestions submitted more code changes and spent less time seeking information, which affected several productivity-related metrics. Conversely, low trust didn’t just slow adoption; it also shaped developers’ experience with AI tools. The researchers found that high-productivity developers with low trust were more disrupted when suggestions were wrong, as they’d raised their baseline expectations.

So what shapes trust?

Another Google study analyzed 12 developer interviews and 1 million AI code suggestions to identify what predicts whether a developer accepts a suggestion. Three categories emerged:

- Suggestion characteristics: Model quality was the strongest predictor. Mid-length suggestions were accepted more often than very short or very long ones. Suggestions appearing late were less likely to be accepted.

- Developer characteristics: Language expertise was a dominant factor, and familiarity with the AI tool helped.

- Development context: Acceptance of suggestions was lower when writing tests, fixing bugs or making small changes. These contexts are more constrained, and developers already know what they want.

Developers don’t trust what they can’t judge, which is why language expertise is a precondition for trust.

What increases trust?

The research pointed to three aspects:

- Unsurprisingly, investing in model quality helps.

- Surfacing model confidence helped calibrate developers’ trust more accurately.

- Personalization. After Google invested in adapting AI tools’ behavior to developers’ preferences, such as suggestion frequency, length and file-level toggling, 9% of developers who had previously disabled AI features re-enabled them. When tool builders don’t support personalization out of the box, a lot of it can be achieved through instructions (e.g. a CLAUDE.md file).
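
To make the instructions route concrete, here is a rough sketch of what such a file might contain. It loosely mirrors the three preferences mentioned above (frequency, length, file-level toggling) plus a nudge to surface uncertainty. The wording, the 20-line limit and the directory names are illustrative assumptions, not taken from the research or from any tool’s documentation, and how closely a given tool follows instructions like these will vary.

```markdown
# Suggestion preferences (illustrative example)

- Only propose code changes when I ask for them; don't volunteer refactors.
- Keep each proposed change small: one function, or roughly 20 lines at most.
- Don't edit files under `migrations/` or `vendor/` (hypothetical paths); ask first.
- If you are unsure about an API or a behavior, say so instead of guessing.
```

Treat a file like this as a starting point and adjust it as you learn where the tool’s suggestions help and where they get in the way.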

As many developers who over-rely on AI write less secure code, I don’t propose maximizing trust. Trusting AI output blindly is dangerous. Instead, I propose investing in appropriate levels of trust through tool choice, better tools, personalization and training.
