GenAI coding tools were supposed to free up mental capacity. Offload the boilerplate, the syntax, the repetitive work, and let developers focus on what matters. That goal has been only partially achieved.

The question isn’t whether AI affects cognitive load. It’s why and how.

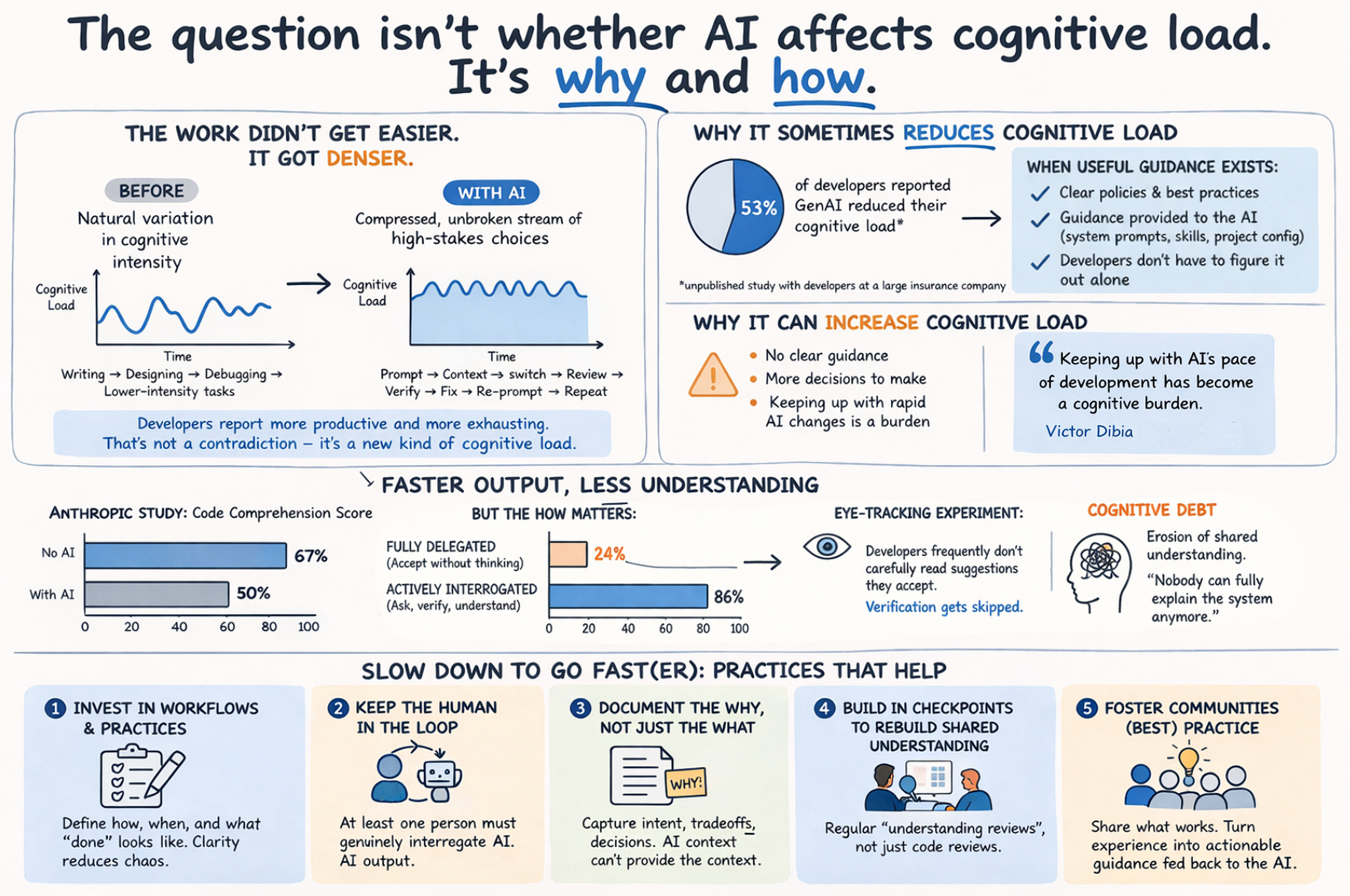

The work didn’t get easier. It got denser.

With the actual programming now handled by an agent, what remains are the hard decisions: system design, architecture tradeoffs, and whether the output actually meets the customer’s needs and quality expectations. You prompt, context-switch to another task while the agent runs, come back to review and verify, catch issues, re-prompt. Repeat. The natural variation in cognitive intensity that used to structure a developer’s day gets compressed into an unbroken stream of high-stakes choices.

Developers report this as simultaneously more productive and more exhausting. That’s not a contradiction – it’s a new kind of cognitive load.

In a recent unpublished study with developers at a large insurance company, only 53% reported that GenAI reduced their cognitive load, fewer than the promises would lead one to expect. The more interesting finding was why: cognitive load tended to drop when useful internal guidance existed. That meant clear policies and best practices around how and when to use AI, which were also provided as context to the AI itself through tools like system prompts, skills and project-level configuration. Where that guidance was absent, developers were left to figure it out themselves, adding yet another layer of decisions to an already dense workday.

Victor Dibia (Microsoft Research) describes how keeping up with AI’s pace of development has itself become a cognitive burden. As one example: Anthropic alone now ships multiple model updates per day.

Faster output, less understanding

Here’s where it gets more concerning. An Anthropic study with 52 developers found that those who used AI scored significantly lower on code comprehension than those who did not: 50% vs. 67%. But the aggregate hides a more important finding: how developers used the AI mattered enormously. Developers who fully delegated scored as low as 24%. Those who actively interrogated the AI’s output scored 86%, better than the no-AI group.

A controlled experiment at our lab using eye-tracking found that half of AI code suggestions went entirely unseen by developers – suggesting that verification is far from systematic, even when suggestions are accepted.

The long-term impact on the team and codebase has a name: cognitive debt. As Margaret-Anne Storey describes it, it’s the erosion of the shared mental model of what the software does and why. Unlike technical debt, it doesn’t trigger a failing build. It shows up later, quietly, when nobody can safely make a change because nobody can fully explain the system anymore.

Slow down to go fast(er)

AI shifts cognitive load – from writing to orchestrating, verifying, and understanding. The shift can be net positive. But it requires actively managing what you lose in the trade.

A few things that actually help:

- Invest in workflows and practices deliberately. AI tools evolve faster than the practices around them. Define how your team uses AI – when to prompt and when not to, how to review output, what counts as “done” and who owns what is shipped. Without that structure, every developer improvises, and the cognitive overhead compounds.

- Keep the human in the loop. Require that at least one person on the team genuinely interrogates AI output rather than passively accepting it. Responsibility for what ships doesn’t transfer to the model!

- Document the why, not just the what. AI can generate inline documentation on the fly. What it can’t capture is intent – why a decision was made, what was considered and rejected. That has to come from you.

- Build in checkpoints to rebuild shared understanding. Regular sessions where the team walks through what was built and why – not just code reviews, but understanding reviews. If only one person can explain a module, you have a problem.

- Foster communities of (best) practice. The burden of staying current is too large for any individual. Teams that share what works – and distribute that knowledge as actionable internal guidance, fed back into their AI tooling – are the ones that turn cognitive load from a risk into an advantage.
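
One way to make the "understanding review" checkpoint concrete is to record, after each session, who on the team can explain each module and flag the ones with too few names. The snippet below is only a minimal sketch of that idea: the function name `find_understanding_gaps`, the threshold of two people, and the module paths and names in the sample data are all hypothetical, not part of any study or tool mentioned above.

```python
def find_understanding_gaps(explainers, min_people=2):
    """Return the modules that fewer than `min_people` teammates can explain.

    `explainers` maps a module path to the list of people who said they
    could walk a colleague through it (hypothetical, self-reported data).
    """
    return sorted(
        module
        for module, people in explainers.items()
        if len(set(people)) < min_people  # set() ignores duplicate names
    )


# Hypothetical notes from one understanding review session.
explainers = {
    "billing/invoice.py": ["ana", "ben"],
    "claims/router.py": ["ana"],
    "auth/session.py": [],
}

for module in find_understanding_gaps(explainers):
    print(f"Needs a walkthrough: {module}")
```

A list like this is only as good as the honesty of the self-reports, so it probably works best as a prompt for scheduling the next walkthrough rather than as a metric.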
