A bad metric becomes a target, the bill comes due, and we end up paying for it twice – once in dollars, once in degraded service.

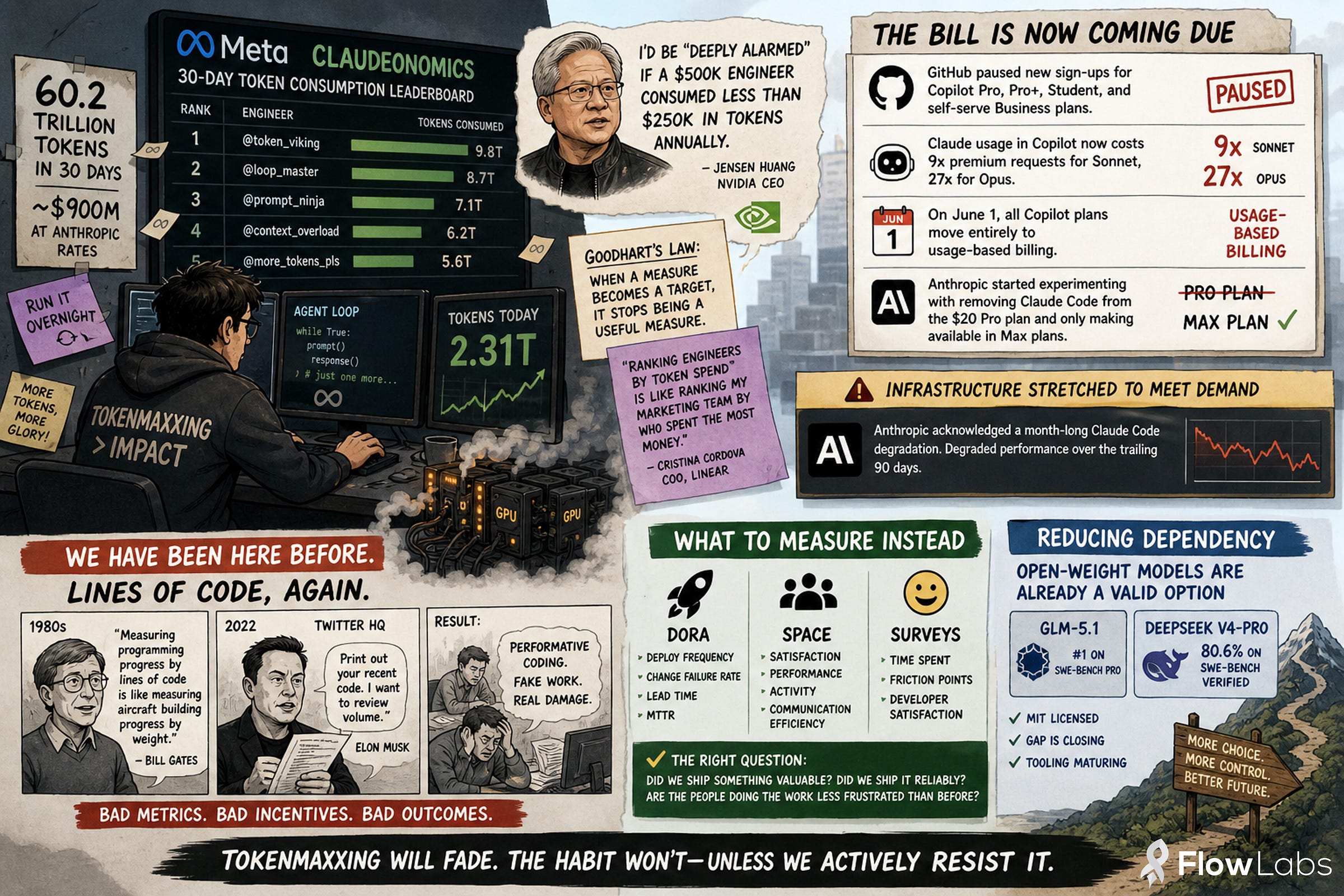

At Meta, engineers reportedly burned through 60.2 trillion AI tokens in 30 days. At Anthropic API rates, that’s around $900M. The cause: an internal “Claudeonomics” leaderboard ranking engineers by token consumption, thereby encouraging them to inflate their numbers. Meta isn’t alone – similar AI usage dashboards currently run or did run at Microsoft, Salesforce, Shopify, and others. Meta has since taken the leaderboard down.

Nvidia CEO Jensen Huang, adding fuel to such a bad metric, declared that he’d be “deeply alarmed” if a $500K engineer consumed less than $250K in tokens annually. Not without his own interest in mind – Nvidia sells the GPUs.

We have been here before.

Lines of code, again!

Lines of code as a productivity metric was a known-bad idea by the late 1980s. Bill Gates is often credited with the line “measuring programming progress by lines of code is like measuring aircraft building progress by weight”. Elon Musk infamously revived it at Twitter in 2022, asking engineers to print out their recent code so he could review volume personally. It went about as well as you’d expect.

Tokens are the same mistake with worse economics. Goodhart’s Law: when a measure becomes a target, it stops being a useful measure. Linear’s COO Cristina Cordova put it cleanly: “Ranking engineers by token spend is like me ranking my marketing team by who spent the most money”.

The bill is now coming due

I’ve been arguing for a while that the flat-rate AI subscription model is unsustainable. In the last few weeks it started to break:

- GitHub paused new sign-ups for Copilot Pro, Pro+, Student, and self-serve Business plans

- Claude usage in Copilot now costs 9x premium requests for Sonnet, 27x for Opus. GitHub frames the change as reflecting actual inference cost differences between models. On June 1, all Copilot plans move entirely to usage-based billing.

- Anthropic started experimenting with removing Claude Code from the $20 Pro plan and only making available in Max plans.

There are two opposing forces on price. Economies of scale and improving inference efficiency will push costs down. But OpenAI, Anthropic, and others have collectively invested hundreds of billions in compute and want returns – with gains. I am expecting that a year from now, we’ll be paying 10x what we pay today with these heavily “subsidized” plans. The classic Silicon Valley playbook applies here too: subsidize aggressively, capture the market, raise prices once everyone is dependent.

Tokenmaxxing incurs further costs

Anthropic has been visibly compute-constrained. Their status page shows degraded performance over the trailing 90 days. In a recent postmortem, Anthropic acknowledged a month-long Claude Code degradation and admitted infrastructure has been “stretched to meet” demand. When engineers run agents in loops overnight to game a leaderboard, that capacity isn’t available for someone doing actual work.

In addition, maximizing token spends is wasting GPU cycles and with it, energy. This behavior is also deeply unsustainable and unethical.

What to measure instead

We don’t need new metrics for the AI era. We need to apply the existing (good) ones.

DORA, SPACE, and periodic surveys on developer satisfaction, time spent and friction points all already capture what matters: did we ship something valuable, did we ship it reliably, are the people doing the work less frustrated than before? The right question isn’t “how much AI did we use”. It’s whether friction went down, satisfaction went up, and the right things got built.

Reducing dependency

The good news: open-weight models are already a valid option. GLM-5.1 currently tops SWE-Bench Pro ahead of Claude Opus 4.6, and DeepSeek V4-Pro hits 80.6% on SWE-Bench Verified – both MIT-licensed. However, self-hosting still requires GPU-enabled infrastructure, and benchmarks don’t fully capture day-to-day developer experience. But the gap is closing month by month, and tooling around inference, fine-tuning, and serving keeps maturing. Teams that start experimenting with open-weight models now will be in a much stronger position the next time vendors increase prices again.

Tokenmaxxing will fade – but the habit of reaching for the easiest metric instead of the right one won’t, unless we actively resist it.

Comments are closed.